Delta Air Lines

The latest Delta news, reviews, and strategies to maximize travel status. Earn more miles, points, and rewards with One Mile at a Time.

Delta Air Lines Reviews

- April 12, 2021

- Ben Schlappig

- January 27, 2020

- Ben Schlappig

- January 25, 2020

- Ben Schlappig

- January 9, 2020

- Ben Schlappig

- August 30, 2019

- Ben Schlappig

- February 6, 2024

- Ben Schlappig

Delta Air Lines Deals

- September 25, 2023

- Ben Schlappig

- July 25, 2023

- Ben Schlappig

- November 13, 2019

- Ben Schlappig

Delta Air Lines quick facts

Delta Air Lines offers the best overall domestic experience of the “Big Three” US carriers, primarily by being just a little bit better than United or American at all the basic functions of operating an airline. Delta has invested in comfortable new aircraft and refurbished its existing fleet with spiffy new interiors and in-flight entertainment. It is also putting customers first by allowing them to pause their Medallion status during big life events and by trialing free Wi-Fi. Delta is becoming the leader in domestic travel, so it makes sense that you’d want to find the best credit card for earning Delta miles and other benefits.

Understanding the Delta SkyMiles loyalty program

Delta’s rewards program is called Delta SkyMiles, and Delta is a member of the SkyTeam alliance. Be sure to check out our complete SkyMiles guides with everything you need to know in order to maximize your miles and elite status benefits.

The latest in Delta Air Lines News

- April 13, 2024

- Ben Schlappig

- April 11, 2024

- Ben Schlappig

- April 9, 2024

- Ben Schlappig

- March 27, 2024

- Ben Schlappig

- March 20, 2024

- Ben Schlappig

- March 14, 2024

- Ben Schlappig

- March 5, 2024

- Ben Schlappig

- March 2, 2024

- Ben Schlappig

Delta credit cards are issued exclusively by American Express, and there are seven different credit cards for consumers to choose from. That means there are a variety of Delta SkyMiles credit card offers out there, and the choice is further complicated by the fact that there are both personal and business versions.

Each of these cards has a separate welcome bonus, but you can typically expect to earn tens of thousands of bonus SkyMiles when you’re approved for a Delta credit card and meet the minimum spending requirements.

Delta Air Lines Insights

- April 12, 2024

- Ben Schlappig

- September 20, 2023

- Ben Schlappig

- July 30, 2023

- Ben Schlappig

TRAVEL REVIEW

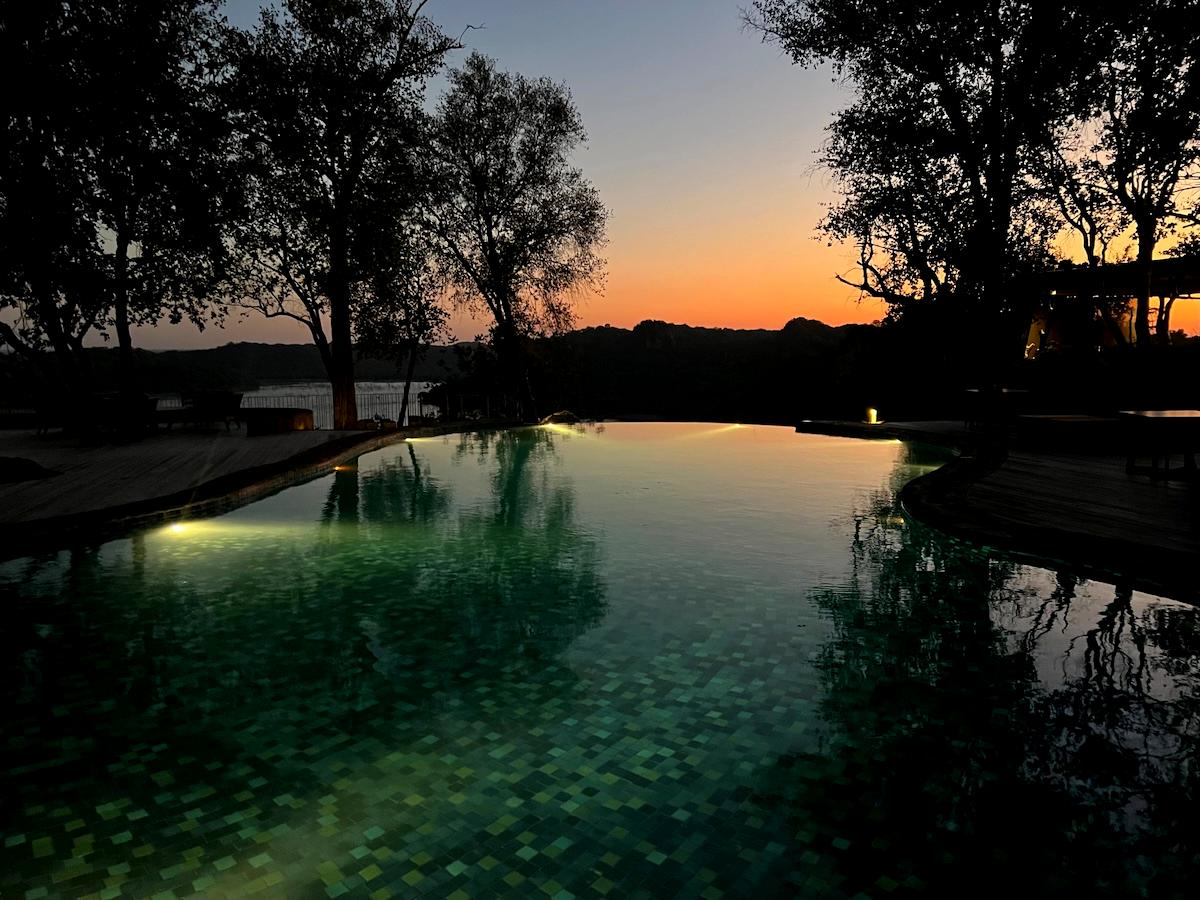

Review: Singita Pamushana Lodge, Zimbabwe

For our trip to Zimbabwe, our final destination was Singita Pamushana Lodge. Singita is known for offering some of Africa’s highest quality safari experiences. We took our first safari several years back, when we stayed at two of the brand’s South…
